In just a few years, AI went from something interesting to learn about in school to something used every day. Many students now use generative AI tools to brainstorm ideas, write code, translate texts, or edit pictures.

Colleges noticed this change quickly. Instead of imposing draconian bans, they revised their AI policies to foster responsible use and safeguard academic honesty.

The result is a new balance: the rules are less strict, but the checks are stronger.

Institutions now want students to keep records of their work, comply with data protection laws, and follow strict rules when using AI in schoolwork.

Important Things to Remember When Using Automated Tools This Semester

Before turning in any work, I tell my students to stop and go through a simple checklist.

This habit keeps your grades and your reputation as a student safe:

Reasons for University AI Policy Updates in 2026

The regulations colleges used in 2024 or 2025 no longer work. Back then, generative systems mostly made text.

Today, smart technologies can work with video, voice, code, pictures, and data analysis. Many of them work as powerful machine-based systems that can support programming, research, and design.

This change prompted instructors to rethink how students learn. Many schools now encourage people and technology to work together instead of forbidding these technologies.

University policy changed for several reasons:

- Technology became part of working life. Students entering the workforce will use AI every day. Instead of telling students to stay away from these tools, universities want to encourage their responsible use.

- Capabilities expanded beyond mere text generation. Modern tools can write code, create presentations, analyze data sets, and set up study spaces. Used correctly, these capabilities can help students learn more.

- Ethical and legal questions came into sharper focus. Colleges need to consider ethics, such as how AI outputs may be biased, how to protect intellectual property rights, and how to safeguard private information.

Today, university rules are less about what you can’t do and more about what you should do.

The goal is simple: to help students use new technology responsibly and maintain academic honesty.

3 AI Policy Trends at Top Universities Around the World

Three principles keep coming up at top schools like Harvard, Oxford, and MIT. The same approach appears in the Columbia University AI policy and similar institutional rules.

I typically tell students that there are three main parts of modern technology policy in higher education.

Transparency

Students must disclose when they use generative tools. Many colleges now want a short statement that explains:

- Which system was used?

- Which prompts were used?

- How was the output changed, or how was it checked?

Privacy of data

Schools and colleges place great importance on data protection and information security. I also tell my students not to put personal or sensitive information into public generative tools.

This is what I mean by sensitive materials:

- university research materials that contain protected health information;

- information that can be used to identify a person;

- private information from internships or labs.

Many universities have their own platforms that comply with legal and information security regulations.

Responsibility

The most crucial guideline is simple: students remain responsible for their schoolwork.

The student who turns in the work is still accountable if an AI system makes mistakes in citations or analysis. These errors can result in breaches of academic integrity.

Automated tools can help students learn, but they can’t replace personal accountability.

The 20 Best Universities in the World and Their AI Policies in 2026

As generative tools become more common in schoolwork, schools around the world are changing their policies.

Every school has its own rules, but many follow the same basic principles to promote academic honesty and help students learn responsibly.

The following summary shows how leading universities approach AI in 2026.

| University | General AI approach |
|---|---|
| Harvard University | Allows automated tools as long as their use is disclosed and approved by the instructor |
| Massachusetts Institute of Technology | Encourages responsible AI experimentation |
| University of Oxford | Emphasizes transparency and academic integrity |
| Stanford University | Sets AI rules course by course |
| University of Cambridge | Model of collaboration between humans and digital technologies |
| Yale University | Allows AI for brainstorming and editing |
| Princeton University | AI must support student learning |
| Columbia University | Requires disclosure in many courses |
| University of Chicago | Very strict rules for academic honesty |
| University of Toronto | AI is acceptable within defined rules |
| University of Melbourne | Framework for responsible use |
| National University of Singapore | Strong focus on data security |
| ETH Zurich | Stresses research integrity |
| University of Tokyo | Emphasizes ethical considerations |
| University of Amsterdam | Stresses ethical issues; AI literacy incorporated into education |
| University College London | Some courses require instructor authorization |
| University of Sydney | Accepts smart tools for drafting support |
| University of Copenhagen | Updated rules for academic honesty |
| University of Seoul | Usage guidelines |
| University of Edinburgh | Proof-of-process approach |

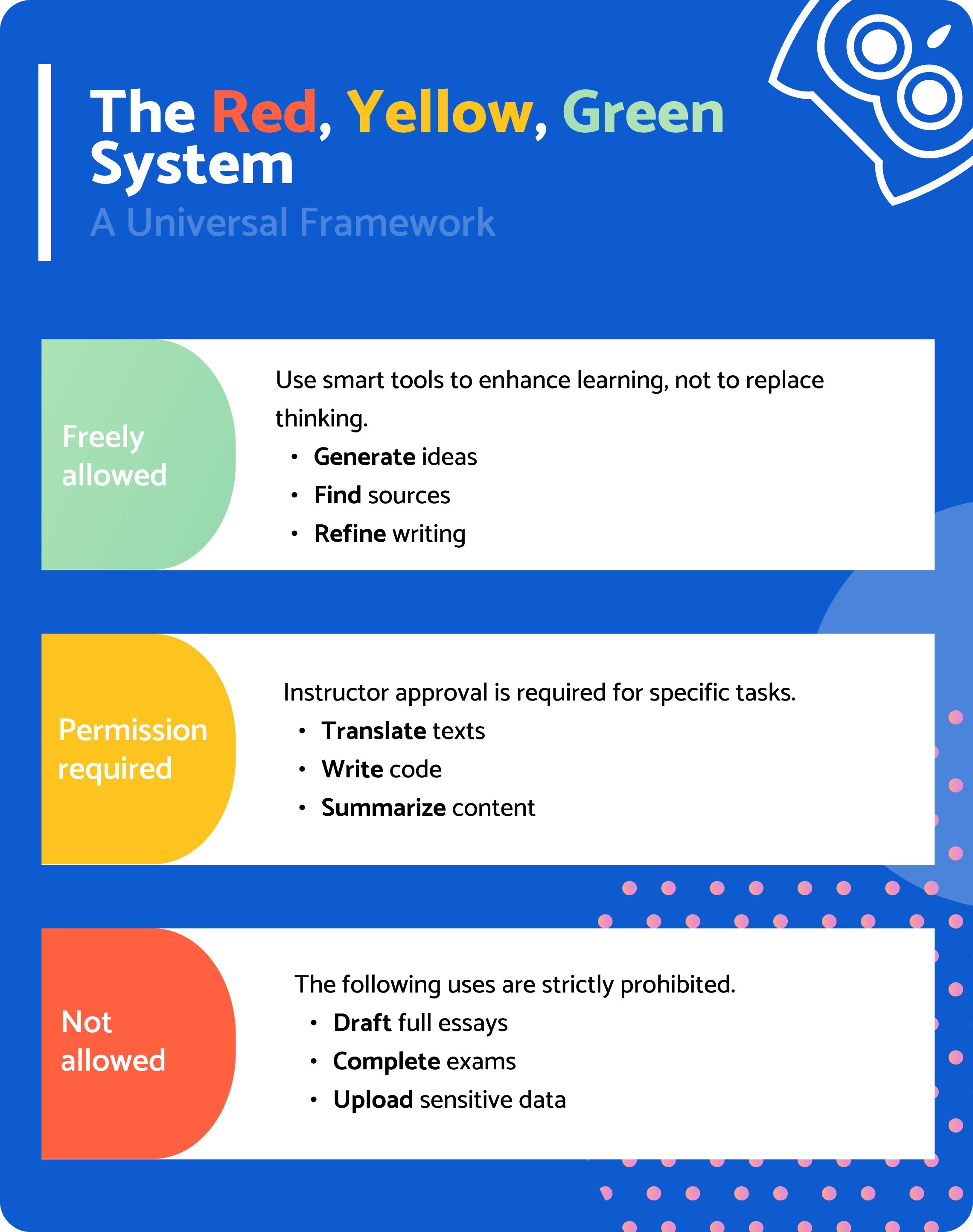

“Red-Yellow-Green” Structure for Using Tools Safely

Many teachers use a traffic light system to teach their students how to use AI.

✅ Green light: you can do it

Students can utilize smart tools to help them learn, but they can’t use them instead of thinking.

Examples include brainstorming essay topics, improving grammar, clarifying wording, finding research sources, and summarizing what you’ve read.

This kind of AI use usually helps students do well.

⚠️ Yellow light: you need permission

Some tasks require the teacher’s permission because they could affect how well you learn.

These are some of them:

- translating assignments and producing computer code;

- making text shorter or changing its structure;

- making pictures or graphs of data.

The teacher might allow these kinds of tools, but students usually have to explain how they used them.

🚫 Red light: not allowed

Some uses of generative technology routinely break university rules.

Examples:

- turning in whole essays written by AI;

- having AI complete tests for you;

- letting AI do schoolwork without the student’s own input;

- putting private university information into systems outside of the university.

These behaviors could be treated as academic misconduct or as unauthorized use of learning aids.

How to Cite AI in 2026 (Updates for APA, MLA, and Chicago)

You should credit and cite correctly when using generative tools to write, analyze data, or create graphics.

Most institutions now want students to show when automated tools helped them write parts of their papers. Recent news on university teaching and AI policy emphasizes openness and good record keeping.

The main guidance comes down to two easy-to-remember rules:

- Don’t treat the system as a human author.

- Include who created the tool, what the tool is, which version it is, and sometimes the prompt that generated the output.

This will help readers understand how the information in your project was generated.

Here is a brief comparison of how the three citation styles use these references:

| Style | Main idea | Example for text output | Example for image or data |
|---|---|---|---|
| APA (7th edition) | Treat the system like software created by a developer. | OpenAI. (2025). ChatGPT (GPT-4o version) [Large language model]. https://chat.openai.com | OpenAI. (2025). DALL-E 3 image generated from prompt “a futuristic campus library” [Image generator]. https://labs.openai.com |
| MLA (9th edition) | Start the citation with the prompt that generated the output. | “Explain renewable energy policy in simple terms.” ChatGPT, GPT-4o version, OpenAI, 12 Feb. 2025, https://chat.openai.com | “A watercolor painting of a solar farm at sunset.” DALL-E 3, OpenAI, 14 Mar. 2025, https://labs.openai.com |
| Chicago (17th edition) | Usually cited in a footnote instead of the bibliography. | ChatGPT, response to “Explain renewable energy trends,” Mar. 12, 2025, OpenAI, https://chat.openai.com | DALL-E 3, image generated from prompt “future smart city design,” Apr. 5, 2025, OpenAI |

When you include generated images, datasets, or analyses in a project, briefly explain the process used to generate them. Mention the prompt you used and how you checked the results.

Some professors also ask students to include the full prompt or conversation transcript in an appendix.
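If you keep track of the same few details for every AI interaction, the three text-output patterns from the comparison above can be generated mechanically. The Python sketch below is only an illustration: the `AIToolUse` class, its field names, and the month abbreviations are my own assumptions, and the output simply mirrors the example entries in the table. Always check the result against your instructor's requirements and the official style guide.

```python
from dataclasses import dataclass
from datetime import date

# Month abbreviations matching the table's examples
# (assumed here to work for both the MLA and Chicago patterns).
MONTHS = ["Jan.", "Feb.", "Mar.", "Apr.", "May", "June",
          "July", "Aug.", "Sept.", "Oct.", "Nov.", "Dec."]


@dataclass
class AIToolUse:
    """One use of a generative tool. All field names are hypothetical."""
    developer: str  # who created the tool, e.g. "OpenAI"
    tool: str       # what the tool is, e.g. "ChatGPT"
    version: str    # which version, e.g. "GPT-4o version"
    prompt: str     # the prompt that generated the output
    used_on: date   # the day the output was generated
    url: str
    kind: str = "Large language model"  # APA bracketed description


def _bare_prompt(use: AIToolUse) -> str:
    # Drop a trailing period so each style can add its own punctuation.
    return use.prompt.rstrip(".")


def cite_apa(use: AIToolUse) -> str:
    """APA pattern: the developer is the author, the tool is the title."""
    return (f"{use.developer}. ({use.used_on.year}). {use.tool} "
            f"({use.version}) [{use.kind}]. {use.url}")


def cite_mla(use: AIToolUse) -> str:
    """MLA pattern: the citation starts with the prompt."""
    d = use.used_on
    return (f"“{_bare_prompt(use)}.” {use.tool}, {use.version}, "
            f"{use.developer}, {d.day} {MONTHS[d.month - 1]} {d.year}, "
            f"{use.url}")


def cite_chicago(use: AIToolUse) -> str:
    """Chicago pattern: footnote text naming the tool and the prompt."""
    d = use.used_on
    return (f"{use.tool}, response to “{_bare_prompt(use)},” "
            f"{MONTHS[d.month - 1]} {d.day}, {d.year}, "
            f"{use.developer}, {use.url}")


example = AIToolUse(
    developer="OpenAI",
    tool="ChatGPT",
    version="GPT-4o version",
    prompt="Explain renewable energy policy in simple terms.",
    used_on=date(2025, 2, 12),
    url="https://chat.openai.com",
)

for formatter in (cite_apa, cite_mla, cite_chicago):
    print(formatter(example))
```

Storing the details once and formatting them three ways makes it less likely that the tool version or the prompt gets lost between drafts.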

Detection Tools vs Proof of Process in Verifying Student Work

Several years ago, universities relied heavily on AI detection software.

These automated tools promised to identify generated work, but they proved unreliable and sometimes flagged students’ own writing as machine-generated. Policies such as the Liberty University AI policy show that process documentation and responsible use now matter more than detection alone.

Because of these problems, institutions began to move away from detection software as their primary verification method. Instead, they increasingly rely on something called “proof of process.”

This includes evidence of how academic work was created, for example:

- document version history;

- saved drafts;

- research notes;

- prompt logs;

- outlines and brainstorming notes.

These proofs show how ideas developed over time.
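For students who are comfortable with a little scripting, a prompt log can be as simple as a text file with one entry per line. The sketch below is one possible way to keep such a log in Python. The function names, the field names, and the JSON Lines file format are my own assumptions, not a format that any university requires.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def log_prompt(log_path, tool, prompt, how_used, how_checked):
    """Append one entry to a JSON Lines prompt log and return the entry.

    The field names are hypothetical; adapt them to whatever your
    instructor asks you to document.
    """
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(timespec="seconds"),
        "tool": tool,                # which system was used
        "prompt": prompt,            # what you asked
        "how_used": how_used,        # how the output fed into your work
        "how_checked": how_checked,  # how you verified the output
    }
    # Append mode keeps earlier entries, so the file grows into a timeline.
    with Path(log_path).open("a", encoding="utf-8") as f:
        f.write(json.dumps(entry, ensure_ascii=False) + "\n")
    return entry


def read_log(log_path):
    """Return all logged entries in the order they were written."""
    path = Path(log_path)
    if not path.exists():
        return []
    with path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

A call such as `log_prompt("prompt_log.jsonl", "ChatGPT", "Suggest three essay topics on solar policy", "brainstorming only", "compared against the course reading list")` adds one timestamped line, and `read_log("prompt_log.jsonl")` returns the whole history if questions come up later.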

I often tell my students to think of writing as a process. Keep notes, save drafts, and write down how your ideas change as you do research.

This documentation protects you against accusations of academic misconduct if questions arise later.

Tools That Help Students Write in a Responsible Way

When you use digital tools carefully, they can help you learn. They help you write better and arrange your thoughts better. But they should never take the place of the student’s own thoughts or writing.

Here are the technologies that most researchers find to be the most useful:

- Grammarly. This tool can help with grammar, clarity, and tone. It works like a smart proofreader, suggesting better ways to say things without rewriting the whole text.

- Notion AI. It helps you organize your research notes, plan, and generate new ideas. Many students use it to keep track of big projects and make outlines.

- Perplexity. This tool helps students find information, learn about different topics, and write short summaries of long texts. It can make doing research faster and easier.

- University AI platforms. Many schools now have their own systems that protect university data and meet strict security standards. These tools are safer to use for schoolwork.

Much of today’s university AI policy news is about how colleges make sure that students can use these tools safely and responsibly.

A Few Last Thoughts

AI will continue to change higher education. The goal of modern university AI policies is not to stop technology, but to help people use it safely.

As a teacher, I remind my students of three habits that will help them in school and beyond:

- Be honest about how you use smart technologies.

- Don’t forget to keep your private information and data safe.

- Remember to focus on learning instead of taking shortcuts.

If you follow these rules, you’ll do well in college and in your future job.

AI is a great tool, but real learning still depends on curiosity, hard work, and honesty!
